We need less reliance on API documentation automation and more reliance on ourselves.

This article is based on my API the Docs (Chicago, April 2019) presentation of the same name.

By Robert Delwood

Lead API Documentation Writer

We're punch drunk. The API documentation writing community is punch drunk on automation, and that's a problem in general. The concept of a computer going into a code database, pulling out function names and parameters, and then formatting those as JSON or HTML is hardly a revolutionary idea now. And yet that's all CEOs see. I use CEOs to personify the causes of most of our problems, although later I'm going to blame us writers a bit, too. CEOs see that a small group of writers can support a large group of programmers. The specific problem is that they hire the minimal number of writers who, along with automation, can write the documentation. The API reference guide documentation, that is. And that doesn't benefit anyone.

The goal here is producing great documentation. I define that simply: Instill 100% confidence 100% of the time.

Programming is nothing if not uncertainty. Even if programmers have used a call dozens of times, there will still be uncertainty. The core of this is Thinking Like a Programmer, and this will be a recurring theme here. That means understanding what's important to programmers and then anticipating their questions. For example, when first using a call, they will copy and paste it into their code. The immediate goal is to get a clean compile. After that, they start tweaking parameters, trying to understand the nuances. Finally, they look at the larger picture and make calls from it, or have it accept calls. This process is called playing with a call, and it's fundamental to how they work. If we as writers understand this, we can anticipate it and write our documentation to address these issues ahead of time. By preempting their questions, we're on our way to instilling 100% confidence.

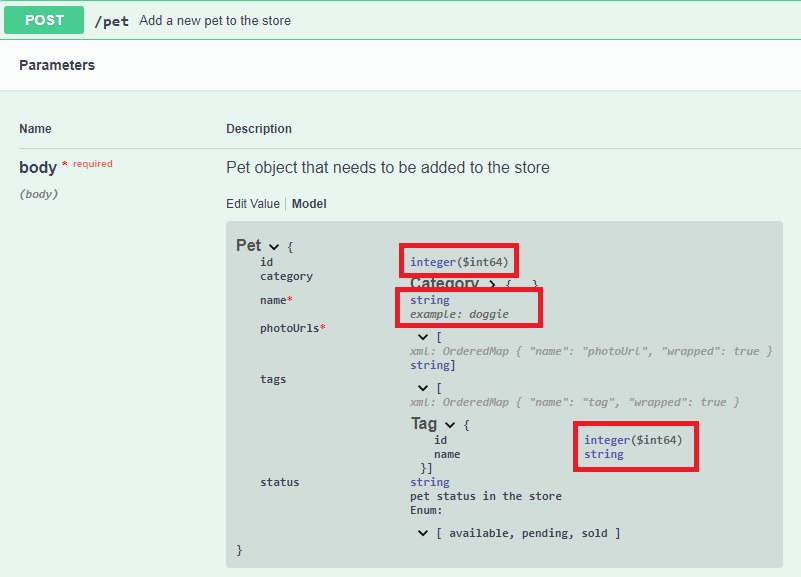

But computers don't write documentation, people do. If this article ended here, the point would have been made. It's just the quality of the documentation that's the issue. The following is an example from Swagger's online pet store and shows some of the questions that come up.

Description. This is a one-line description. If your application is no more complicated than a pet store, you might get away with one line. However, most commercial applications are more complex, so call and parameter descriptions need much more than one line. It's common to need a paragraph or more, along with examples and code snippets. I think a programmer wrote this example; a writer would have said it differently. Not to get into editing, but they didn't even bother to use a period.

Id. It seems like a serial number, but that raises questions. As it's presented, the caller passes in this value, but most databases automatically provide a serial number, called the key value. Programmers are used to having this value provided for them. So not only do we have to write for what programmers don't know (this entire call will be new to them, for example), but we also have to write for what they do know. If the call differs from their past experience, we have to explain that to them. This last point is something an experienced programmer would know, maybe even assume, but it would not be obvious to a less experienced writer. Instilling 100% confidence is a daunting task indeed.

Then notice the data type: int64. This is a very large number, from about -9 × 10¹⁸ to 9 × 10¹⁸. If it gets converted to an unsigned value, that's still 0 to about 18 × 10¹⁸. Either way, it's an 18 quintillion range. Why does the number need to be so large? Other questions:
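To make those bounds concrete, here is a small Python check. It is only a sketch of the arithmetic: a signed 64-bit integer in two's complement runs from -2^63 to 2^63 - 1, and an unsigned one from 0 to 2^64 - 1. Python integers don't overflow, so the values come out exact.

```python
# Exact bounds of 64-bit integers (Python ints are arbitrary precision, so no overflow).
INT64_MIN = -(2**63)      # -9,223,372,036,854,775,808
INT64_MAX = 2**63 - 1     #  9,223,372,036,854,775,807
UINT64_MAX = 2**64 - 1    # 18,446,744,073,709,551,615 (minimum is 0)


def int64_span() -> int:
    """Number of distinct values a signed int64 can hold."""
    return INT64_MAX - INT64_MIN + 1


if __name__ == "__main__":
    print(f"int64:  {INT64_MIN:,} to {INT64_MAX:,}")
    print(f"uint64: 0 to {UINT64_MAX:,}")
    print(f"distinct values: {int64_span():,}")
```

Either way the count of distinct values is 2^64, roughly 1.8 × 10¹⁹, which is where the 18 quintillion figure comes from.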

Name. This is presumably the pet's name. They finally provide an example, but it's one of the stupidest examples: doggie. While it's a legitimate value (a string), it does a poor job of showcasing what the parameter does. A pet's name is something like Rover or Charlie; Data in Star Trek had a cat named Spot. My criticism of this example is that it's neither a good name nor even an example of a category name. Dog or cat would be examples of that. Doggie doesn't explain anything. The whole point of an example is to show what the parameter does, with a value real enough that programmers could use it literally.

Kinds of Documents

When talking about API documents, what is the first kind that comes to mind? The API reference guide. That is the community's iconic document. But it's not the only document. It can't be. I always equate an API reference guide with a dictionary. All the words in a great novel are in the dictionary. All the words in a bad novel are in the same dictionary. So clearly, just having a dictionary doesn't make you a great writer. You have to know grammar, syntax, usage, and so on. The same is true for APIs. Just knowing the calls isn't enough. You need to know the calling sequences, how everything interacts, and how to incorporate the calls into the language you're using. That means you'll need other forms of documentation:

In this regard, the API reference guide now seems almost incidental. And yet, these other guides are rarely produced.

For example, once in a writers' meeting, three experienced API documentation writers, myself included, were showing our work. I noticed two things. First, at some point each of us apologized for something in our products. Either some feature was missing, the UI looked bad, or something wasn't working correctly, and we each had the same excuse: We didn't have time to get to that, or it wasn't a priority. Second, we were asked how many examples we had. We looked at each other sheepishly because we knew the answer. The most any of us had was three samples, the direct opposite of what it should have been. If automation is so good, it should free us up to write the other documentation, the kind that computers can't write for us. Remember, earlier I said the problem was that CEOs hire the minimal number of writers who, along with automation, can write the API reference guide. That means writers have the Sisyphean task of writing only the API reference guide and can never get to the other documents.

To see if any of this really matters, try this test.

Do you still think a getting-started paper is unnecessary?

Hopefully I've already dispelled the notion that automation can do everyone's job for them. The opposite is actually true: there is only a little that it can help with. Even within that space, there are things a computer can't do for you. Seven things, to be specific.

We're entering a programmer's world, so why does anyone think it isn't all about programming? We need to know their jargon, the languages, their humor even, but most importantly, we need to think like programmers. For starters, the following two translations may be amusing.

We may even think we know what it means, but that isn't the point. I exaggerate, but this is how programmers feel when reading documentation written by non-programmers. The terms aren't used as a programmer would use them, the samples are a little off, and in general, it sounds off. In either case, be it the sign translators or, here, the technical writers, our readers have lost confidence from the very first sentence.

If we are to instill 100% confidence, it begins with writing in a voice and tone they're familiar with. The same is true for all other aspects. One of our missions is to ease the burden on our developers. Understand that we're a garden-variety annoyance to them. They know they have to stop to answer some of our questions. It becomes a special annoyance when they have to stop to explain something we should already know. We need to write sample code, sample projects, and code snippets, and be able to explain all of it. That takes a programmer. The problem is that programmers are notoriously bad writers, even when they have an interest in writing. Programmer-writers, trained technical writers who know programming, are perhaps ideal, but they're hard to find.

This isn't bad news for technical writers. We absolutely need writers in this field, and it's convenient to recruit them as technical writers, with the understanding, though, that they learn programming. The good news is that it isn't as hard as it sounds. First, the work is on a direct continuum: if you know a little programming, you're a little effective, and more programming makes you more effective. So as writers grow into the title, they become more effective along the way. Second, most basic programming concepts are universal to all languages. If you learn looping in one language, you can apply it to others.

To make our developers love us, we have to appeal to their most base emotion: Laziness. So whatever you do, make it easy for them. That means you may have to go out of your way to make that happen.

Developers don't like doing anything twice, and they like copy and paste most of all. So whatever you include for them, keep those two things in mind. This gets back to Thinking Like a Programmer. For instance, put a simple code sample, which is what they want anyhow, near the top of the web page so it's the first thing they see and they don't have to scroll down.

Another common example: many writers put a cURL sample on the page. This will be talked about later, but cURL is a notation that encapsulates a REST call in one line for use in a command-line window for testing. Useful, but it's not how developers will use that REST call. So provide some examples of the call in other languages, such as Python or C#. But even that's not the end. There are probably no fewer than a dozen ways to make a REST call. If you know how your developers actually make the call, take that extra step and do it for them.
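As a sketch of taking that extra step, here is what a Python developer might actually keep in a project instead of a one-line cURL command. It assumes the public Swagger Petstore sample's `GET /pet/{petId}` endpoint and uses only the standard library's `urllib.request`; `BASE_URL`, `build_get_pet_request`, and `get_pet` are hypothetical names for illustration, not part of any official SDK. If your developers use a different HTTP client, the sample should use that client instead.

```python
import json
import urllib.request

# Assumption: the Swagger Petstore v2 demo server; substitute your own API's base URL.
BASE_URL = "https://petstore.swagger.io/v2"


def build_get_pet_request(pet_id: int) -> urllib.request.Request:
    """Build (but don't send) a GET /pet/{petId} request that asks for JSON."""
    return urllib.request.Request(
        f"{BASE_URL}/pet/{pet_id}",
        headers={"Accept": "application/json"},
        method="GET",
    )


def get_pet(pet_id: int, timeout: float = 10.0) -> dict:
    """Send the request and decode the JSON response body into a dict."""
    with urllib.request.urlopen(build_get_pet_request(pet_id), timeout=timeout) as resp:
        return json.load(resp)
```

Calling `get_pet(1)` sends the request over the network. Splitting the request-building step from the sending step lets a reader see exactly what goes over the wire before anything is sent.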

All of this often means more work for us writers up front, but the more convenient it is for them, the more they like it. And that's the point.

This one can't be overstated. If I thought I could pull off an API guide using only examples, I would.

Samples serve two purposes. First, they follow the "a picture is worth a thousand words" cliché: each one can often demonstrate an idea or procedure more precisely than a narrative description can. Second, they appeal to the programmer's laziness. Thinking Like a Programmer tells us that they commonly know which call they need and just want to copy and paste it to move along.

Include samples, and lots of them. These could be the code for making the call, or the JSON needed for the body. There's an all-too-common approach that limits the samples on an API reference guide page to just one. Use as many as needed, perhaps following a progression from simple to complicated, or showing a diversity of options. Put the simple samples up front, above the fold, so programmers don't have to scroll down.
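Here is one way such a progression might look for a request body, sketched in Python. It assumes the Swagger Petstore's `Pet` schema, in which `name` and `photoUrls` are required and `status` is one of `available`, `pending`, or `sold`; the names `MINIMAL_PET`, `FULL_PET`, and `to_body` and all the values are hypothetical. Note the realistic values (Rover, Dog) in place of doggie. Whether a caller should supply `id` at all is exactly the kind of question the reference page has to answer.

```python
import json

# Sample 1: the simplest body, only the fields the Petstore schema marks as required.
MINIMAL_PET = {
    "name": "Rover",
    "photoUrls": [],
}

# Sample 2: the same call with the optional fields filled in (values are illustrative).
FULL_PET = {
    "id": 1001,  # caller-supplied int64 key; document whether callers should set this
    "category": {"id": 1, "name": "Dog"},
    "name": "Rover",
    "photoUrls": ["https://example.com/photos/rover.jpg"],
    "tags": [{"id": 7, "name": "good-with-kids"}],
    "status": "available",  # one of: available, pending, sold
}


def to_body(pet: dict) -> str:
    """Serialize a sample as a pretty-printed JSON request body."""
    return json.dumps(pet, indent=2)


if __name__ == "__main__":
    print(to_body(MINIMAL_PET))
    print(to_body(FULL_PET))
```

Putting the minimal sample first gives programmers something to copy for a clean first call; the full sample is there for when they start playing with the call.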

cURL (pronounced like it looks, curl, and short for "client URL") is a development tool that includes a library for making internet calls and a command-line tool. It's a capable tool, but many writers and developers use it only for testing REST calls. Its notable characteristic is that it encapsulates an entire REST call in one line that can be copied and pasted into a command line. The results of the call display in the command window. This allows users to try the call ahead of time, changing the parameters and seeing the resulting JSON. That makes it a testing tool, and not even the best testing tool available. The notation is popular, and many automated API documentation systems include it as a sample automatically. But it's not how programmers are going to make the call in their projects. If it's easy to include in the project, yes, include it. However, don't have it as the only sample. Many automated generators can also provide samples in other languages, such as Python and C#. As mentioned in "We're here for their convenience, not yours," if you know how your developers make their calls, take the extra step and include that as well.
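To show how a one-line cURL sample maps onto project code, here is a sketch that translates a cURL POST into Python's standard `urllib.request`, with each cURL flag noted next to its equivalent. The endpoint (the Swagger Petstore's `POST /pet`) and the helper names `build_add_pet_request` and `add_pet` are assumptions for illustration.

```python
import json
import urllib.request

# The one-line cURL sample a generator might emit (assumed Petstore "add a pet" call):
#   curl -X POST "https://petstore.swagger.io/v2/pet" \
#        -H "Content-Type: application/json" \
#        -d '{"name": "Rover", "photoUrls": []}'


def build_add_pet_request(pet: dict) -> urllib.request.Request:
    """Build the Python equivalent of the cURL line above, without sending it."""
    return urllib.request.Request(
        "https://petstore.swagger.io/v2/pet",
        data=json.dumps(pet).encode("utf-8"),          # cURL's -d (request body)
        headers={"Content-Type": "application/json"},  # cURL's -H (header)
        method="POST",                                 # cURL's -X POST
    )


def add_pet(pet: dict, timeout: float = 10.0) -> dict:
    """Send the request and return the decoded JSON response."""
    with urllib.request.urlopen(build_add_pet_request(pet), timeout=timeout) as resp:
        return json.load(resp)
```

One quirk worth documenting for readers: `urllib` normalizes header names with `str.capitalize()`, so the header above is stored and looked up as `Content-type`.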

This is summed up best as: Review, review, review. It's a newbie mistake to think you're ever done writing. Review your documents constantly. There are three reasons for this.

Remember, we're here for their convenience, not yours. Repeat ad nauseam.

I have always maintained that technical communicators are among the most put-upon groups. Specifically, we'll never get the tools we need. Earlier I pointed out that CEOs are mostly to blame. But for this issue, we have no one to blame but ourselves. So as a group, we have to ask one question: What can we do to get more tools? Simple: we just have to ask. We can do this in three ways.

We think that one person can't change an industry. Perhaps not, but organize the collective and the companies will respond.

We may get paid by the company, but we work for our clients. We need to be advocates for our clients. We're in a position to be aware of our company's strengths and weaknesses. If there's a gap in the REST calls, suggest that it be filled. Become testers of the products. Enter a number where a character should go. Try to break the product, and take pride in doing it faster than anyone else. Be vocal and say so if a procedure is awkward. All of this may put us at odds with our developers, but so what? It makes for a better product. Do what's right for the clients.
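As a sketch of what "try to break the product" can look like for a single numeric parameter, the following builds a list of hostile inputs for the Petstore's `GET /pet/{petId}` path; for a numeric parameter, that means characters where a number should go. The endpoint, the `EDGE_CASES` list, and the `edge_case_urls` helper are illustrative assumptions. Send each request with whatever client your team uses and flag any response that isn't a clear, documented error.

```python
from urllib.parse import quote

# Hypothetical edge cases for an int64 path parameter such as petId.
EDGE_CASES = [
    "abc",                 # characters where a number should go
    "",                    # empty value
    "-1",                  # negative id
    "0",                   # zero
    "1.5",                 # not an integer
    str(2**63 - 1),        # boundary: largest signed int64
    str(2**63),            # one past the int64 maximum
    "1; DROP TABLE pets",  # injection-style input
]


def edge_case_urls(base_url: str) -> list[str]:
    """Build one GET /pet/{petId} URL per edge case, percent-encoding each value."""
    return [f"{base_url}/pet/{quote(value, safe='')}" for value in EDGE_CASES]


if __name__ == "__main__":
    for url in edge_case_urls("https://petstore.swagger.io/v2"):
        print(url)
```

Whatever the product does with these inputs belongs in the reference documentation, too: if an oversized id comes back as an error, programmers should be able to read that before they hit it.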

API document automation is overstated. API automation tools aren't about writing; they're about programming. They're good at automating API programming. A closer look at those tools shows that writing is an afterthought. MuleSoft, one of the leaders in this field, hasn't changed its writing editor in seven years, yet it has come out with three versions of its API suite. Text is written by people. It's our own fault that no one is saying so, but those tools are making us go backward. We need to say so now and fix that.